Posted by Modestos

This post was originally in YouMoz, and was promoted to the main blog because it provides great value and interest to our community. The author’s views are entirely his or her own and may not reflect the views of Moz, Inc.

This step-by-step guide aims to help users with the link auditing process relying on own judgment, without blindly relying on automation. Because links are still a very important ranking factor, link audits should be carried out by experienced link auditors rather than third party automated services. A flawed link audit can have detrimental implications.

The guide consists of the following sections:

- How to make sure that your site’s issues are links-related.

- Which common misconceptions you should to avoid when judging the impact of backlinks.

- How to shape a solid link removal strategy.

- How to improve the backlink data collection process.

- Why you need to re-crawl all collected backlink data.

- Why you need to find the genuine URLs of your backlinks.

- How to build a bespoke backlink classification model.

- Why you need to weight and aggregate all negative signals.

- How to prioritise backlinks for removal.

- How to measure success after having removed/disavowed links.

In the process that follows, automation is required only for data collection, crawling and metric gathering purposes.

Disclaimer: The present process is by no means panacea to all link-related issues – feel free to share your thoughts, processes, experiences or questions within the comments section – we can all learn from each other 🙂

#1 Rule out all other possibilities

Nowadays link removals and/or making use of Google’s disavow tool are the first courses of action that come to mind following typical negative events such as ranking drops, traffic loss or de-indexation of one or more key-pages on a website.

However, this

doesn’t necessarily mean that whenever rankings drop or traffic dips links are the sole culprits.

For instance, some of the actual reasons that these events may have occurred can relate to:

-

Tracking issues – Before trying anything else, make sure the reported traffic data are accurate. If traffic appears to be down make sure there aren’t any issues with your analytics tracking. It happens sometimes that the tracking code goes missing from one or more pages for no immediately apparent reason.

-

Content issues – E.g. the content of the site is shallow, scraped or of very low quality, meaning that the site could have been hit by an algorithm (Panda) or by a manual penalty.

-

Technical issues – E.g. a poorly planned or executed site migration, a disallow directive in robots.txt, wrong implementation of rel=”canonical”, severe site performance issues etc.

-

Outbound linking issues – These may arise when a website is linking out to spam sites or websites operating in untrustworthy niches i.e. adult, gambling etc. Linking out to such sites isn’t always deliberate and in many cases, webmasters have no idea where their websites are linking out to. Outbound follow links need to be regularly checked because the hijacking of external links is a very common hacking practice. Equally risky are outbound links pointing to pages that have been redirected to bad neighborhood sites.

-

Hacking – This includes unintentional hosting of spam, malware or viruses that come as a consequence because of hacking.

In all these cases, trying to recover any loss in traffic has nothing to do with the quality of the inbound links as the real reasons are to be found elsewhere.

Remember: There is nothing worse than spending time on link removals when in reality your site is suffering by non-link-related issues.

#2 Avoid common misconceptions

If you have lost rankings or traffic and you can’t spot any of issues presented in previous step, you are left with the possibility of checking out your backlinks.

Nevertheless, you should avoid the falling victim of the following three misconceptions before being reassured that there aren’t any issues with your sites backlinks.

a) It’s not just about Penguin

The problem: Minor algorithm updates take place pretty much every day and not just on the dates Google’s reps announce them, such as the Penguin updates. According to Matt Cutts, in 2012 alone Google launched 665 algorithmic updates, which averages at about two per day during the entire year!

If your site hasn’t gained or lost rankings on the exact dates Penguin was refreshed or other official updates rolled out, this does not mean that your site is immune to all Google updates. In fact, your site may have been hit already by less commonly known updates.

The solution: The best ways to spot unofficial Google updates is by regularly keeping an eye on the various SERP volatility tools as well as on updates from credible forums where many site owners and SEOs whose sites have been hit share their own experiences.

SERPs volatility (credit: SERPs.com)

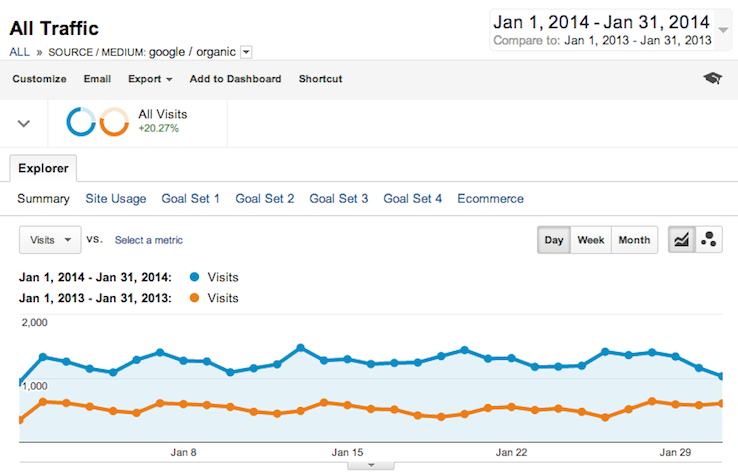

b) Total organic traffic has not dropped

The problem: Even though year-over-year traffic is a great KPI, when it’s not correlated with rankings, many issues may remain invisible. To make things even more complicated, “not provided” makes it almost impossible to break down your organic traffic into brand and non-brand queries.

The solution: Check your rankings regularly (i.e. weekly) so you can easily spot manual penalties or algorithmic devaluations that may be attributed to your site’s link graph. Make sure that you not only track the keywords with the highest search volumes but also other several mid- or even long-tail ones. This will help you diagnose which keyword groups or pages have been affected.

c) It’s not just about the links you have built

The problem: Another common misconception is to assume that because you haven’t built any unnatural links your site’s backlink profile is squeaky-clean. Google evaluates all links pointing to your site, even the ones that were built five or 10 years ago and are still live, which you may or may not be aware of. In a similar fashion, any new links coming into your site do equally matter, whether they’re organic, inorganic, built by you or someone else. Whether you like it or not, every site is accountable and responsible for all inbound links pointing at it.

The solution: First, make sure you’re regularly auditing your links against potential negative SEO attempts. Check out Glen Gabe’s 4 ways of carrying out negative SEO checks and try adopting at least two of them. In addition, carry out a thorough backlink audit to get a better understanding of your site’s backlinks. You may be very surprised finding out which sites have been linking to your site without being aware of it.

#3 Shape a solid link removal strategy

Coming up with a solid strategy should largely depend on whether:

-

You have received a manual penalty.

-

You have lost traffic following an official or unofficial algorithmic update (e.g. Penguin).

-

You want to remove links proactively to mitigate risk.

I have covered thoroughly in

another post the cases where link removals can be worthwhile so let’s move on into the details of each one of the three scenarios.

Manual penalties Vs. Algorithmic devaluations

If you’ve concluded that the ranking drops and/or traffic loss seem to relate to backlink issues, the first thing you need to figure out is whether your site has been hit manually or algorithmically.

Many people confuse manually imposed penalties with algorithmic devaluations, hence making strategic mistakes.

- If you have received a Google notification and/or a manual ‘Impacts Links’ action (like the one below) appears within Webmaster Tools it means that your site has already been flagged for unnatural links and sooner or later it will receive a manual penalty. In this case, you should definitely try to identify which the violating links may be and try to remove them.

- If no site-wide or partial manual actions appear in your Webmaster Tools account, your entire site or just a few pages may have been affected by an official (e.g. Penguin update/refresh) or unofficial algorithmic update in Google’s link valuation. For more information on unofficial updates keep an eye on Moz’s Google update history.

There is also the possibility that a site has been hit manually and algorithmically at the same time, although this is a rather rare case.

Tips for manual penalties

If you’ve received a manual penalty, you’ll need to remove as many unnatural links as possible to please Google’s webspam team when requesting a review. But before you get there, you need to figure out what

type of penalty you have received:

-

Keyword level penalty – Rankings for one or more keywords appear to have dropped significantly.

-

Page (URL) level penalty – The pages no longer ranks for any of its targeted keywords, including head and long-tail ones. In some cases, the affected page may even appear to be de-indexed.

-

Site-wide penalty – The entire site has been de-indexed and consequently no longer ranks for any keywords, including the site’s own domain name.

1. If one (or more) targeted keyword(s) has received a penalty, you should first focus on the backlinks pointing to the page(s) that used to rank for the penalized keyword(s) BEFORE the penalty took place. Carrying out granular audits against the pages of your best ranking competitors can give you a rough idea of how much work you need to do in order to rebalance your backlink profile.

Also, make sure you review all backlinks pointing to URLs that 301 redirect or have a rel=”canonical” to the penalized pages. Penalties can flow in the same way PageRank flows through 301 redirects or rel=”canonical” tags.

2. If one (or more) pages (URLs) have received a penalty, you should definitely focus on the backlinks pointing to these pages first. Although there are no guarantees that resolving the issues with the backlinks of the penalized pages may be enough to lift the penalty, it makes sense not making drastic changes on the backlinks of other parts of the site unless you really have to e.g. after failing a first reconsideration request.

3. If the penalty is site-wide, you should look at all backlinks pointing to the penalized domain or subdomain.

In terms of the process you can follow to manually identify and document the toxic links, Lewis Seller’s excellent

Ultimate Guide to Google Penalty Removal covers pretty much all you need to be doing.

Tips for algorithmic devaluations

Pleasing Google’s algorithm is quite different to pleasing a human reviewer. If you have lost rankings due to an algorithmic update, the first thing you need to do is to carry out a backlink audit against the top 3-4 best-ranking websites in your niche.

It is really important to study the backlink profile of the sites, which are still ranking well, making sure you exclude Exact Match Domains (EMDs) and Partial Match Domains (PMDs).

This will help you spot:

- Unnatural signals when comparing your site’s backlink profile to your best ranking competitors.

- Common trends amongst the best ranking websites.

Once you have done the above you should then be in a much better position to decide which actions you need to take in order to rebalance the site’s backlink profile.

Tips for proactive link removals

Making sure that your site’s backlink profile is in better shape compared to your competitors should always be one of your top priorities, regardless of whether or not you’ve been penalized. Mitigating potential link-related risks that may arise as a result of the next Penguin update, or a future manual review of your site from Google’s webspam team, can help you stay safe.

There is nothing wrong with proactively removing and/or disavowing inorganic links because some of the most notorious links from the past may one day in the future hold you back for an indefinite period of time, or in extreme cases, ruin your entire business.

Removing obsolete low quality links is highly unlikely to cause any ranking drops as Google is already discounting (most of) these unnatural links. However, by not removing them you’re risking getting a manual penalty or getting hit by the next algorithm update.

Undoubtedly, proactively removing links may not be the easiest thing to sell a client. Those in charge of sites that have been penalized in the past are always much more likely to invest in this activity, without any having any hesitations.

Dealing with unrealistic growth expectations it can be easily avoided when honestly educating clients about the current stance of Google towards SEO. Investing on this may save you later from a lot of troubles, avoiding misconceptions or misunderstandings.

A reasonable site owner would rather invest today into minimizing the risks and sacrifice growth for a few months rather than risk the long-term sustainability of their business. Growth is what makes site owners happy, but sustaining what has already been achieved should be their number one priority.

So, if you have doubts about how your client may perceive your suggestion about spending the next few months into re-balancing their site’s backlink profile so it conforms with Google’s

latest quality guidelines, try challenging them with the following questions:

-

How long could you afford running your business without getting any organic traffic from Google?

- What would be the impact to your business if you five best performing keywords stop ranking for six months?

#4 Perfect the data collection process

Contrary to Google’s recommendation, relying on link data from Webmaster Tools alone in most cases isn’t enough, as Google doesn’t provide every piece of link data that is known to them. A great justification for this argument is the fact that many webmasters have received from Google examples of unnatural links that do not appear in the available backlink data in WMT.

Therefore, it makes perfect sense to try combining link data from as many different data sources as possible.

- Try including ALL data from at least one of the services with the biggest indexes (Majestic SEO, Ahrefs) as well as the ones provided by the two major search engines (Google and Bing webmaster tools) for free, to all verified owners of the sites.

- Take advantage of the backlink data provided by additional third party services such as Open Site Explorer, Blekko, Open Link Profiler, SEO Kicks etc.

Note that most of the automated link audit tools aren’t very transparent about the data sources they’re using, nor about the percentage of data they are pulling in for processing.

Being in charge of the data to be analyzed will give you a big advantage and the more you increase the quantity and quality of your backlink data the better chances you will have to rectify the issues.

#5 Re-crawl all collected data

Now that have collected as much backlink data as possible, you now need to separate the chaff from the wheat. This is necessary because:

- Not all the links you have already collected may still be pointing to your site.

- Not all links pose the same risk e.g Google discounts no follow links.

All you need to do is crawl all backlink data and filter out the following:

-

Dead links – Not all links reported by Webmaster Tools, Majestic SEO, OSE and Ahrefs are still live as most of them were discovered weeks or even months ago. Make sure you get rid of URLs that do no longer link to your site such as URLs that return a 403, 404, 410, 503 server response. Disavowing links (or domains) that no longer exist can reduce the chances of a reconsideration request from& being successful.

-

Nofollow links – Because nofollow links do not pass PageRank nor anchor text, there is no immediate need trying to remove them – unless their number is in excess when compared to your site’s follow links or the follow/nofollow split of your competitors.

Tip: There are many tools which can help crawling the backlink data but I would strongly recommend Cognitive SEO because of its high accuracy, speed and low cost per crawled link.

#6 Identify the authentic URLs

Once you have identified all live and follow links, you should then try identifying the authentic (canonical) URLs of the links. Note that this step is essential only in case you want to try to remove the toxic links. Otherwise, if you just want to disavow the links you can skip this step making sure you disavow the entire domain of each toxic-linking site rather than the specific pages linking to your site.

Often, a link appearing on a web page can be discovered and reported by a crawler several times as in most cases it would appear under many different URLs. Such URLs may include a blog’s homepage, category pages, paginated pages, feeds, pages with parameters in the URL and other typical duplicate pages.

Identifying the authentic URL of the page where the link was originally placed on (and getting rid the URLs of all other duplicate pages) is very important because:

- It will help with making reasonable link removal requests, which in turn can result in a higher success rate. For example, it’s pretty pointless contacting a Webmaster and requesting link removals from feeds, archived or paginated pages.

- It will help with monitoring progress, as well as gathering evidence for all the hard work you have carried out. The latter will be extremely important later if you need to request a review from Google.

Example 1 – Press release

In this example the first URL is the “authentic” one and all the others ones need to be removed. Removing the links contained in the canonical URL will remove the links from all the other URLs too.

Example 2 – Directory URLs

In the below example it isn’t immediately obvious on which page the actual link sits on:

http://www.192.com/business/derby-de24/telecom-services/comex-2000-uk/18991da6-6025-4617-9cc0-627117122e08/ugc/?sk=c6670c37-0b01-4ab1-845d-99de47e8032a (non canonical URL with appended parameter/value pair: disregard)

http://www.192.com/atoz/business/derby-de24/telecom-services/comex-2000-uk/18991da6-6025-4617-9cc0-627117122e08/ugc/ (canonical page: keep URL)

http://www.192.com/places/de/de24-8/de24-8hp/ (directory category page: disregard URL)

Unfortunately, this step can be quite time-consuming and I haven’t as yet come across an automated service able to automatically detect the authentic URL and instantly get rid of the redundant ones. If you are aware of any accurate and reliable ones, please feel free to share examples of these in the comments 🙂

#7 Build your own link classification model

There are many good reasons for building your own link classification model rather than relying on fully automated services, most of which aren’t transparent about their toxic link classification formulas.

Although there are many commercial tools available, all claiming to offer the most accurate link classification methodology, the decision whether a link qualifies or not for removal should sit with you and not with a (secret) algorithm. If Google, a multi-billion dollar business, is still failing in many occasions to detect manipulative links and relies up to some extent on humans to carry out manual reviews of backlinks, you should do the same rather than relying on a $99/month tool.

Unnatural link signals check-list

What you need to do in this stage is to check each one of the “authentic” URLs (you have identified from the previous step) against the most common and easily detectable signals of manipulative and unnatural links, including:

- Links with commercial anchor text, including both exact and broad match.

- Links with an obvious manipulative intent e.g. footer/sidebar text links, links placed on low quality sites (with/without commercial anchor text), blog comments sitting on irrelevant sites, duplicate listings on generic directories, low quality guest posts, widget links, press releases, site-wide links, blog-rolls etc. Just take a look at Google’s constantly expanding

link-schemes page for the entire and up-to-date list.

- Links placed on authoritative yet untrustworthy websites. Typically these are sites that have bumped up their SEO metrics with unnatural links, so they look attractive for paid link placements. They can be identified when one (or more) of the below conditions are met:

- MozRank is significantly greater than MozTrust.

- PageRank if much greater than MozRank.

- Citation flow is much greater than Trust Flow.

- Links appearing on pages or sites with low quality content, poor language and poor readability such as spun, scraped, translated or paraphrased content.

- Links sitting on domains with little or no topical relevance. E.g. too many links placed on generic directories or too many technology sites linking to financial pages.

- Links, which are part of a link network. Although these aren’t always easy to detect you can try identifying footprints including backlink commonality, identical or similar IP addresses, identical Whois registration details etc.

- Links placed only on the homepages of referring sites. As the homepage is the most authoritative page on most websites, links appearing there can be easily deemed as paid links – especially if their number is excessive. Pay extra attention to these links and make sure they are organic.

- Links appearing on sites with content in foreign languages e.g. Articles about gadgets in Chinese linking to a US site with commercial anchor text in English.

- Site-wide links. Not all site-wide links are toxic but it is worth manually checking them for manipulative intent e.g. when combined with commercial anchor text or when there is no topical relevance between the linked sites.

- Links appearing on hacked, adult, pharmaceutical and other “bad neighborhood” spam sites.

- Links appearing on de-indexed domains. Google de-indexes websites that add no value to users (i.e. low quality directories), hence getting links from de-indexed websites isn’t a quality signal.

- Redirected domains to specific money-making pages. These can include EMDs or just authoritative domains carrying historical backlinks, usually unnatural and irrelevant.

Note that the above checklist isn’t exhaustive but should be sufficient to assess the overall risk score of each one of your backlinks. Each backlink profile is different and depending on its size, history and niche you may not need to carry out all of the aforementioned 12 checks.

Handy Tools

There are several paid and free tools that can massively help speeding things up when checking your backlinks against the above checklist.

Although some automated solutions can assist with points 2, 4, 5, 8 and 10, it is recommended to manually carry out these activities for more accurate results.

Jim Boykin’s Google Backlink Tool for Penguin & Disavow in action

#8 Weighting & aggregating the negative signals

Now that you have audited all links you can calculate the total risk score for each one of them. To do that you just need to aggregate all manipulative signals that have been identified in the previous step.

In the most simplistic form of this classification model, you can allocate one point to each one of the detected negative signals. Later, you can try up-weighting some of the most important signals – usually I do this for commercial anchor text, hacked /spam sites etc.

However, because each niche is unique and consists of a different ecosystem, a one-size-fits-all approach wouldn’t work. Therefore, I would recommend trying out a few different combinations to improve the efficiency of your unnatural link detection formula.

Sample of weighted and aggregated unnatural link signals

Turning the data into a pivot chart makes it much easier to summarize the risk of all backlinks in a visual way. This will also help estimating the effort and resources needed, depending on the number of links you decide to remove.

#9 Prioritizing links for removal

Unfortunately, there isn’t a magic number (or percentage) of links you need to remove in order to rebalance your site’s backlink profile. The decision of how much is enough would largely depend on whether:

- You have already lost rankings/traffic.

- Your site has been manually penalized or hit by an algorithm update.

- You are trying to avoid a future penalty.

- Your competitors have healthier backlink profiles.

No matter which the case is it makes common sense to focus first on those pages (and keywords), which are more critical to your business. Therefore, unnatural links pointing to pages with high commercial value should be prioritized for link removals.

Often, these pages are the ones that have been heavily targeted with links in the past, hence it’s always worth paying extra attention into the backlinks of the most heavily linked pages. On the other hand, it would be pretty pointless spending time analyzing the backlinks pointing at pages with very few inbound links and these should be de-prioritized.

To get an idea of your most important page’s backlink vulnerability score you should try Virante’s

Penguin Analysis tool.

#10 Defining & measuring success

After all backlinks have been assessed and the most unnatural ones have been identified for removal, you need to figure out a way to measure the effectiveness of your actions. This would largely depend on the situation you’re in (see step 3). There are 3 different scenarios:

- If you have received a manual penalty and have worked hard before requesting Google to review your backlinks, receiving a “Manual spam action revoked” message is the ultimate goal. However, this isn’t to say that if you get rid of the penalty your site’s traffic levels will recover to their pre-penalty levels.

- If you have been hit algorithmically you may need to wait for several weeks or even months until you notice the impact of your work. Penguin updates are rare and typically there is one every 3-6 months, therefore you need to be very patient. In any case, recovering fully from Penguin is very difficult and can take a very long time.

- If you have proactively removed links things are vaguer. Certainly avoiding a manual penalty or future algorithmic devaluations should be considered a success, especially on sites that have engaged in the past with heavy unnatural linking activities.

Marie Haynes has written a very thorough post about

traffic increases following the removal of link-based penalties.

Summary

Links may not always be the sole reason why a site has lost rankings and/or organic search visibility. Therefore before making any decision about removing or disavowing links you need to rule out other potential reasons such as technical or content issues.

If you are convinced that there are link based issues at play then you should carry out an extensive manual backlink audit. Building your own link classification model will help assessing the overall risk score of each backlink based on the most common signals of manipulation. This way you can effectively identify the most inorganic links and prioritize which ones should be removed/disavowed.

Remember: All automated unnatural link risk diagnosis solutions come with many and significant caveats. Study your site’s ecosystem, make your own decisions based on your gut feeling and avoid taking blanket approaches.

…and if you still feel nervous or uncomfortable sacrificing resources from other SEO activities to spend time on link removals, I’ve recently written a post highlighting the reasons why link removals can be very valuable, if done correctly.

Sign up for The Moz Top 10, a semimonthly mailer updating you on the top ten hottest pieces of SEO news, tips, and rad links uncovered by the Moz team. Think of it as your exclusive digest of stuff you don’t have time to hunt down but want to read!

Continue reading →

The most important invention in the history of humankind is : COOKING! Suzana Herculano-Houzel: What is so special about the human brain? Intelligence is the ability of the organism to expand future options: Alex Wissner-Gross: A new equation for intelligence We all love to feel and touch. A step forward into making human-machine interaction more […] Continue reading →

The most important invention in the history of humankind is : COOKING! Suzana Herculano-Houzel: What is so special about the human brain? Intelligence is the ability of the organism to expand future options: Alex Wissner-Gross: A new equation for intelligence We all love to feel and touch. A step forward into making human-machine interaction more […] Continue reading →